NLPIR SEMINAR 24th ISSUE COMPLETED

Last Monday, Zhaoyang Wang gave a presentation on the paper "End-to-end Sequence Labeling via Bi-directional LSTM-CNNs-CRF" and shared some thoughts on it.

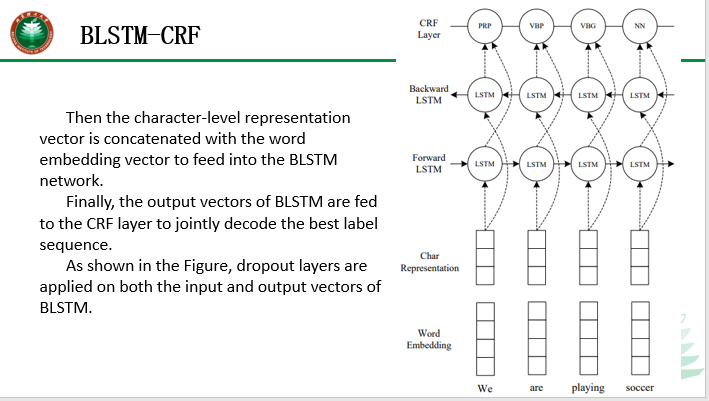

In the picture, dashed arrows indicate dropout layers, which are applied in two places. First, a dropout layer is applied to the character embeddings before they are fed into the CNN. Second, dropout layers are applied to both the input and output vectors of the BLSTM. These dropout layers help reduce overfitting.
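
To make the dropout placement concrete, here is a minimal sketch in plain Python for a single token. It is not the paper's implementation: `char_cnn` and `blstm` are hypothetical stand-ins (a max-pool over characters and an identity function), the `forward` helper is invented for illustration, and the dropout rate of 0.5 is an assumed default. Only the three places where dropout is applied follow the description above.

```python
import random

def dropout(vec, rate, rng, training=True):
    """Inverted dropout: zero each element with probability `rate` and
    scale the survivors by 1 / (1 - rate). Does nothing at inference time."""
    if not training or rate == 0.0:
        return list(vec)
    keep = 1.0 - rate
    return [x / keep if rng.random() < keep else 0.0 for x in vec]

def char_cnn(char_vectors):
    """Stand-in for the character CNN: max-pool over characters, per dimension."""
    return [max(column) for column in zip(*char_vectors)]

def blstm(vec):
    """Stand-in for the BLSTM: identity, just to keep the sketch runnable."""
    return list(vec)

def forward(char_emb, word_emb, rate=0.5, rng=None, training=True):
    rng = rng or random.Random(0)
    # 1) Dropout on the character embeddings before they enter the CNN.
    char_repr = char_cnn([dropout(e, rate, rng, training) for e in char_emb])
    # 2) Dropout on the BLSTM input (word embedding + character representation).
    blstm_in = dropout(word_emb + char_repr, rate, rng, training)
    # 3) Dropout on the BLSTM output, before it is passed on to the CRF layer.
    return dropout(blstm(blstm_in), rate, rng, training)

features = forward([[1.0, 2.0], [3.0, 0.5]], [0.1, 0.2], training=False)
```

With `training=False` every dropout call is a pass-through, so `features` is simply the word embedding concatenated with the max-pooled character representation. During training, each element is either zeroed or scaled up, which is what discourages the model from relying on any single feature.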

The LSTM-CNNs-CRF model can also be applied to ancient Chinese sentence tagging.
